Inside GPT-OSS: Open-Weight Reasoning Models Built for Agentic AI

GPT-OSS-20B and GPT-OSS-120B

GPT-OSS is a pair of open-weight reasoning models, gpt-oss-20b and gpt-oss-120b, released under the Apache 2.0 license. Unlike the proprietary ChatGPT, GPT-OSS is designed to be run, modified, and fine-tuned by developers. The models are optimized for agentic workflows, long-form reasoning, tool use, and structured outputs.

This article gives a practical overview of GPT-OSS: its architecture, training pipeline, reasoning abilities, and where it fits among today’s models.

GPT-OSS is much more flexible than ChatGPT

Open-weight and flexible, allowing for tweaking and experimentation.

Designed for agentic workflows (Python and web search)

Capable of long CoT (chain of thought)

Customizable by developers

OpenAI operates ChatGPT, one of the most widely used and influential conversational AI products, so its release of an open-weight model has drawn a lot of attention in both academia and industry. GPT-OSS has a very different safety profile from OpenAI’s flagship product and API endpoints, however. The difference is not due to weaker training; open-weight models simply cannot rely on centralized, system-level safeguards once they are released.

The GPT-OSS-20B model is meant to run on regular consumer hardware with at least 16GB of memory, whereas GPT-OSS-120B is meant for servers. While ChatGPT works out of the box, GPT-OSS requires some basic coding to get running. I personally find that a small Python script does the job quickly and efficiently. Alternatively, to get a feel for how the model responds, Hugging Face provides an inference option on the model landing page. This is a freemium option, though, and may be discontinued in the future.
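The small Python script mentioned above might look like the following sketch. It assumes the `transformers`, `torch`, and `accelerate` packages are installed, that the weights are pulled from the Hugging Face Hub under the id `openai/gpt-oss-20b`, and that the machine has enough memory. The helper names `build_messages` and `generate_reply` are made up for illustration.

```python
def build_messages(user_prompt):
    """Wrap a single user prompt in the chat-message list format."""
    return [{"role": "user", "content": user_prompt}]


def generate_reply(user_prompt, model_id="openai/gpt-oss-20b", max_new_tokens=256):
    """Load the model (downloaded on first use) and return its reply message."""
    # Imported lazily so build_messages works without transformers installed.
    from transformers import pipeline

    pipe = pipeline(
        "text-generation",
        model=model_id,      # assumed Hub id
        torch_dtype="auto",  # let the library choose the weight dtype
        device_map="auto",   # place weights on whatever devices are available
    )
    outputs = pipe(build_messages(user_prompt), max_new_tokens=max_new_tokens)
    # For chat-style input, generated_text holds the whole conversation;
    # the last entry is the newly generated assistant message.
    return outputs[0]["generated_text"][-1]
```

Calling `generate_reply("Explain Mixture-of-Experts in two sentences.")` triggers a multi-gigabyte download on first use, so it is worth trying the hosted inference option first.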

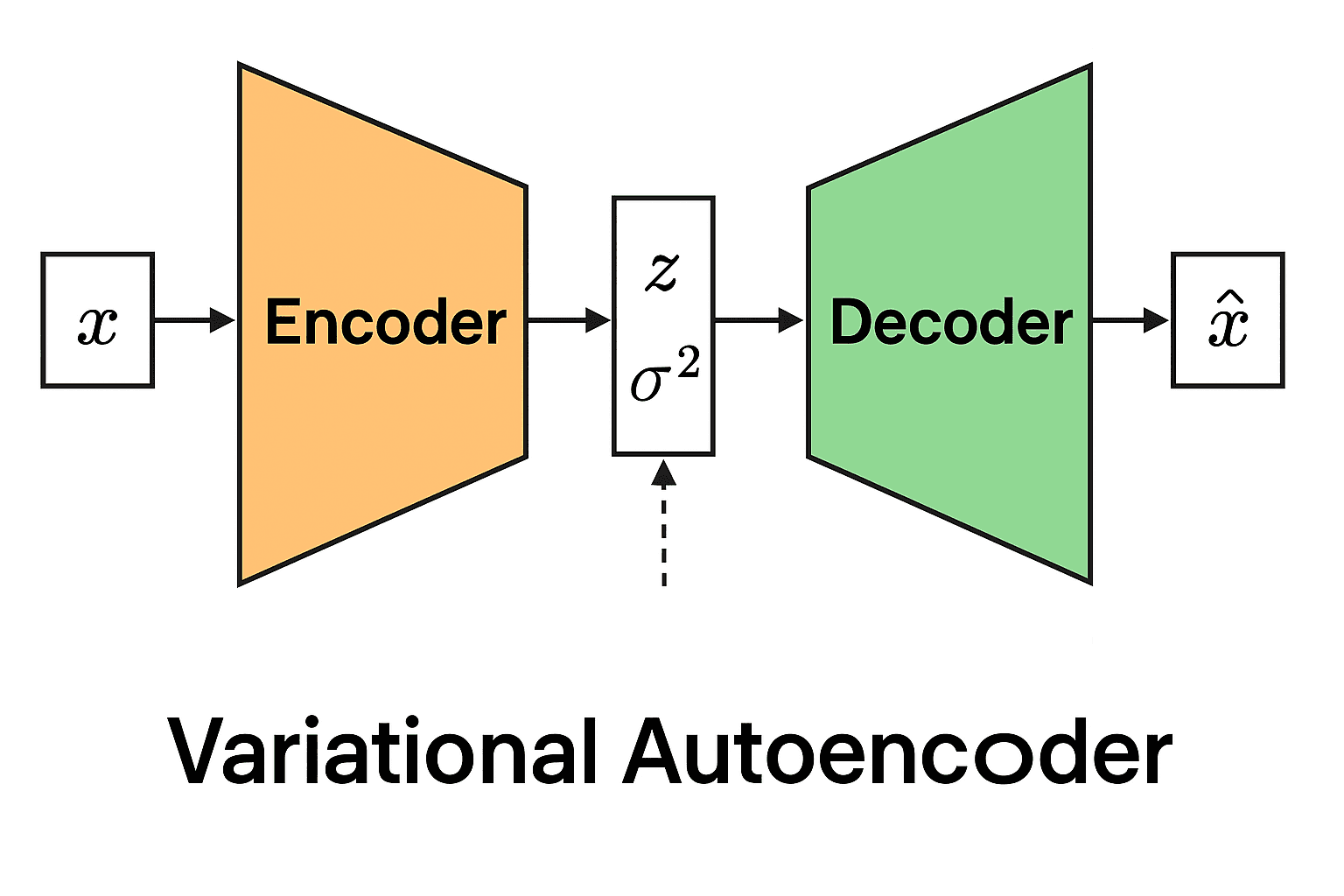

Architecture Overview

GPT-OSS models are autoregressive Mixture-of-Experts (MoE) transformers built on architectural ideas from GPT-2 and GPT-3.

Model Sizes

GPT-OSS-120B: 116.8B total parameters, 36 layers, 5.1B active parameters per token.

GPT-OSS-20B: 20.9B total parameters, 24 layers, 3.6B active parameters per token.

To keep inference efficient, only a subset of experts is active for each token, even though the total parameter count is large.
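With the figures above, roughly 4% of the 120B model's parameters (5.1B of 116.8B) and 17% of the 20B model's (3.6B of 20.9B) are used per token. The toy sketch below shows the general idea of top-k expert routing. It is not the GPT-OSS implementation: the expert count, the value of k, and the scalar "experts" are illustrative assumptions.

```python
import math
import random


def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Softmax over the selected logits only, so the k weights sum to 1.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_forward(x, experts, router_logits, k):
    """Combine the outputs of only the selected experts for one token."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))


# Toy setup (hypothetical): 8 scalar experts, 2 active per token.
random.seed(0)
experts = [lambda x, s=s: s * x for s in range(1, 9)]
logits = [random.gauss(0.0, 1.0) for _ in experts]
routes = top_k_route(logits, k=2)
output = moe_forward(3.0, experts, logits, k=2)
```

Only the two selected experts are ever evaluated in `moe_forward`, which is why compute per token tracks the active parameter count rather than the total.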

Quantization and Hardware Efficiency

GPT-OSS applies post-training quantization to the MoE weights using the MXFP4 format (4.25 bits per parameter). This reduces the memory footprint, so users do not need access to a large server farm to run inference. This enables:

GPT-OSS-120B to run on a single 80GB GPU

GPT-OSS-20B to run with only 16GB of memory
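A back-of-the-envelope calculation shows why these limits are plausible. The sketch below assumes, as a simplification, that every parameter is stored at 4.25 bits. In practice only the MoE weights are quantized, and activations and the KV cache need additional memory, so the real footprint differs somewhat.

```python
BITS_PER_PARAM_MXFP4 = 4.25  # figure quoted in the text


def quantized_size_gb(total_params, bits_per_param=BITS_PER_PARAM_MXFP4):
    """Approximate weight size in GB (10**9 bytes) at a uniform bit width."""
    return total_params * bits_per_param / 8 / 1e9


size_120b = quantized_size_gb(116.8e9)                      # fits under 80 GB
size_20b = quantized_size_gb(20.9e9)                        # fits under 16 GB
bf16_120b = quantized_size_gb(116.8e9, bits_per_param=16)   # 16-bit baseline
print(f"120B @ MXFP4: {size_120b:.1f} GB, 20B @ MXFP4: {size_20b:.1f} GB")
print(f"120B @ 16-bit: {bf16_120b:.1f} GB")
```

At 16 bits per parameter, the larger model's weights alone would exceed a single 80GB GPU several times over.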

As a result, users with modest computing resources can run the models locally. Privacy-sensitive tasks, such as summarizing payslips, banking documents, and medical information, can be handled by the local model without sending the data over the internet. Without quantization, individual researchers, small companies, and regular users would not be able to run models of this capability locally.

Tokenizer

tiktoken is a library available on GitHub, maintained by OpenAI. It includes the o200k_harmony tokenizer, a Byte Pair Encoding (BPE) tokenizer extended with special tokens for role-based and channel-based chat formatting. BPE breaks text into small, reusable pieces so a language model can efficiently represent and generate words, even ones it has never seen before.
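To make the idea of "small, reusable pieces" concrete, here is a toy BPE trainer in pure Python. It is a teaching sketch, not the o200k_harmony tokenizer: real tokenizers work on bytes, use far larger corpora, and apply many thousands of merges. The function names are made up for illustration.

```python
from collections import Counter


def most_frequent_pair(words):
    """Count adjacent symbol pairs across all words, weighted by word frequency."""
    pairs = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return max(pairs, key=pairs.get) if pairs else None


def merge_pair(words, pair):
    """Replace every occurrence of the pair with a single merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        merged[tuple(out)] = merged.get(tuple(out), 0) + freq
    return merged


def learn_bpe(corpus, num_merges):
    """Learn merge rules from a whitespace-split corpus, starting from characters."""
    words = Counter(tuple(w) for w in corpus.split())
    merges = []
    for _ in range(num_merges):
        pair = most_frequent_pair(words)
        if pair is None:
            break
        words = merge_pair(words, pair)
        merges.append(pair)
    return merges, words


merges, vocab_words = learn_bpe("low low lower lowest low", num_merges=2)
```

After two merges the frequent stem "low" has become a single symbol, while the rarer suffixes in "lower" and "lowest" remain split into characters. An unseen word such as "lowly" could still be encoded from the learned stem plus leftover characters.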

Pretraining

Data

GPT-OSS models are trained on a text-only dataset containing trillions of tokens with special emphasis on:

STEM and mathematics

Programming and code

General knowledge

Harmful content, particularly hazardous biosecurity knowledge, was filtered out by reusing the CBRN (chemical, biological, radiological, and nuclear) pretraining filters originally developed for GPT-4o.

Training Infrastructure

Training was conducted on NVIDIA H100 GPUs using PyTorch with expert-optimized Triton kernels and FlashAttention:

GPT-OSS-120B: approximately 2.1M H100 GPU-hours

GPT-OSS-20B: approximately 10x fewer GPU-hours

Post-Training and Reasoning

After pretraining, the models were post-trained with chain-of-thought (CoT) reinforcement learning techniques, similar to those used for OpenAI’s o3. The models are taught:

How to reason step by step

How to solve complex math and coding problems

How to use tools such as Python execution and web browsing

Harmony Chat Format

GPT-OSS uses the harmony chat format, a role-based messaging structure with explicit message boundaries and roles such as System, Developer, User, and Assistant. As an analogy, imagine asking a friend to help you prepare for final exams: the friend’s role is only to ask questions, and yours is only to answer. GPT-OSS is trained with a similar division of roles, so that it follows the User-Assistant setting when chatting with a human user.

The format also introduces channels:

analysis: internal chain-of-thought

commentary: tool calls

final: user-visible output

This structure enables advanced agentic behaviors but requires careful handling, especially in multi-turn conversations. In particular, the user and assistant roles must not be swapped, or the application will not behave as intended.
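The sketch below illustrates how roles, channels, and message boundaries can be flattened into a single prompt string. The special-token spellings (`<|start|>`, `<|channel|>`, `<|message|>`, `<|end|>`) reflect my understanding of the published harmony documentation and should be treated as an assumption. The real format has more detail, so in practice the official renderer or the model's chat template should be used instead of a hand-rolled function like this hypothetical `render_message`.

```python
def render_message(role, content, channel=None):
    """Render one message with explicit start/end boundaries."""
    # Channels apply to assistant messages; other roles omit the channel tag.
    header = role if channel is None else f"{role}<|channel|>{channel}"
    return f"<|start|>{header}<|message|>{content}<|end|>"


# (role, channel, content) triples for a short exchange.
conversation = [
    ("system", None, "Reasoning: low"),
    ("user", None, "What is 2 + 2?"),
    ("assistant", "analysis", "Simple arithmetic: 2 + 2 = 4."),
    ("assistant", "final", "2 + 2 = 4."),
]
prompt = "".join(render_message(r, c, ch) for r, ch, c in conversation)
print(prompt)
```

Because every message carries its own role in the header, a multi-turn history can be replayed without any ambiguity about who said what, which is what keeps the user and assistant roles from being confused.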

Variable Reasoning Effort

In ChatGPT, one can choose among modes such as Auto, Instant, Thinking, Pro, and Deep Research. The choice tells ChatGPT how long the user is willing to wait for a response and how much chain-of-thought to spend on it. Similarly, GPT-OSS has three reasoning effort levels:

low

medium

high

These are set via system prompt keywords (e.g., Reasoning: high). Higher levels result in longer chain-of-thought and improved accuracy, at the cost of latency and compute.
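A minimal sketch of setting the level from code follows. The `Reasoning: <level>` keyword comes from the text above; the helper name `system_prompt_with_effort` and the base prompt are illustrative assumptions.

```python
VALID_EFFORTS = ("low", "medium", "high")


def system_prompt_with_effort(effort, base_prompt="You are a helpful assistant."):
    """Append the reasoning keyword line to a system prompt."""
    if effort not in VALID_EFFORTS:
        raise ValueError(f"effort must be one of {VALID_EFFORTS}, got {effort!r}")
    return f"{base_prompt}\nReasoning: {effort}"


messages = [
    {"role": "system", "content": system_prompt_with_effort("high")},
    {"role": "user", "content": "Prove that the sum of two odd integers is even."},
]
```

Validating the level up front avoids silently sending a misspelled keyword, which the model would simply treat as ordinary prompt text.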

Agentic Tool Use

A large language model with relatively few parameters is more prone to hallucinations, so users benefit when the model can interact with tools. GPT-OSS models are trained to interact with:

Web browsing via search and open

Python code execution in a stateful Jupyter environment

Arbitrary developer-defined functions with schemas.

Tool use can be enabled or disabled through the system prompt.
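For developer-defined functions, the application side typically declares a schema for each tool and executes whatever call the model requests. The sketch below shows one way to do that. The `get_weather` tool, the registry layout, and `dispatch_tool_call` are hypothetical, and the schema follows the common JSON Schema style rather than anything specified in this article.

```python
import json


def get_weather(city):
    """Hypothetical developer-defined tool; returns canned data for the sketch."""
    return {"city": city, "forecast": "sunny", "temp_c": 21}


TOOLS = {
    "get_weather": {
        "function": get_weather,
        "schema": {
            "name": "get_weather",
            "description": "Look up the current weather for a city.",
            "parameters": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"],
            },
        },
    }
}


def dispatch_tool_call(name, arguments_json):
    """Check required arguments against the schema, then run the tool."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    tool = TOOLS[name]
    args = json.loads(arguments_json)
    missing = [k for k in tool["schema"]["parameters"]["required"] if k not in args]
    if missing:
        raise ValueError(f"missing arguments: {missing}")
    # The JSON result would be sent back to the model as a tool message.
    return json.dumps(tool["function"](**args))


result = dispatch_tool_call("get_weather", '{"city": "Paris"}')
```

Checking the arguments before execution matters because the model's tool call is generated text and may be malformed or incomplete.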

Evaluation Results

Reasoning and Coding

It is debatable which of Gemini, Claude, ChatGPT, and other hosted chat models is best at solving complex problems. Among models that can be run offline, GPT-OSS is particularly strong in mathematics and reasoning-heavy tasks. For example, GPT-OSS-20B uses over 20k chain-of-thought tokens per AIME problem on average. Both models perform strongly on coding and tool-use benchmarks, with GPT-OSS-120B approaching the performance of OpenAI’s o4-mini.

Health Performance

GPT-OSS models perform competitively on HealthBench evaluations.

Notably, GPT-OSS-120B approaches OpenAI o3 performance and outperforms several frontier closed models. However, it is important to note that the advice from a large language model is not intended to replace medical professionals.

Multilingual Performance

On MMMLU benchmarks across 14 languages, GPT-OSS-120B at a high reasoning effort setting comes close to o4-mini-high performance.

Safety and Limitations

Even the best systems have limitations, and GPT-OSS is no exception. As an open-weight model, it cannot rely on the system-level safeguards that protect OpenAI’s proprietary products, and it has other limitations as well.

Preparedness Framework

GPT-OSS-120B does not reach OpenAI’s “High capability” thresholds under the Preparedness Framework, even after adversarial fine-tuning, in any of the three tracked categories:

Biological and chemical capability

Cyber capability

AI self-improvement

Disallowed Content and Jailbreaks

On standard disallowed content evaluations, both models perform on par with OpenAI o4-mini. GPT-OSS-20B slightly underperforms on illicit/violent categories but still exceeds GPT-4o. Robustness to jailbreaks using the StrongReject benchmark is comparable to o4-mini.

Instruction Hierarchy

Any public chat model must follow an instruction hierarchy to behave reliably as a chatbot; otherwise it fails at the bare minimum, which is to chat. GPT-OSS follows system and developer message priorities, but it generally underperforms o4-mini on instruction hierarchy tests such as system prompt extraction and prompt injection hijacking.

Chain-of-Thought and Hallucinations

GPT-OSS chains of thought are intentionally unrestricted, thus:

CoT may contain hallucinations

CoT may include unsafe or unfiltered language

The chains of thought are left unrestricted because researchers found that when models are explicitly discouraged from expressing certain thoughts, they can instead learn to conceal their reasoning while continuing to misbehave. Developers are therefore advised not to expose raw chain-of-thought to end users, as it may contain hallucinated or unfiltered language that is inappropriate for direct display.
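In an application, this advice amounts to showing only the final channel. The sketch below assumes the model output has already been parsed into dictionaries with `role`, `channel`, and `content` keys; that message layout and the `user_visible_text` helper are illustrative assumptions, not a library API.

```python
def user_visible_text(messages):
    """Return only the text the assistant emitted on the 'final' channel."""
    return "\n".join(
        m["content"]
        for m in messages
        if m.get("role") == "assistant" and m.get("channel") == "final"
    )


assistant_turn = [
    {"role": "assistant", "channel": "analysis",
     "content": "Maybe the answer is 5? No, 2 + 2 = 4."},
    {"role": "assistant", "channel": "commentary",
     "content": '{"tool": "python", "code": "2 + 2"}'},
    {"role": "assistant", "channel": "final", "content": "2 + 2 = 4."},
]
shown = user_visible_text(assistant_turn)
```

The analysis and commentary messages can still be logged for debugging or monitoring; they just never reach the end user.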

Fairness and Bias

On the BBQ fairness benchmarks, GPT-OSS models perform at roughly the same level as OpenAI o4-mini.

Conclusion

GPT-OSS represents a significant step forward for open-weight reasoning models. It combines long CoT reasoning, agentic tool use, and strong math and coding performance in a form developers can fully control. That matters because training an LLM from scratch is practically infeasible for most individuals: the compute requirement is massive unless one owns a supercomputer or spends a fortune on cloud infrastructure.

Technical freedom and openness also shift the burden of responsibility. OpenAI’s model card and license clearly list GPT-OSS’s shortcomings and where it may fail. Like a car, which serves as transportation but becomes a lethal machine in the hands of a criminal, an LLM has to be used responsibly, especially once restrictions are lifted. Safety, deployment, and monitoring are no longer handled by a centralized API, but by the system builder. For teams building agentic systems, GPT-OSS is therefore not a drop-in replacement for ChatGPT, but a powerful foundation.

References

[1] OpenAI et al., “gpt-oss-120b & gpt-oss-20b Model Card,” Aug. 8, 2025, arXiv:2508.10925. doi: 10.48550/arXiv.2508.10925.